Archive

Photography Off the Scale is out

Our book Photography Off the Scale is out and available! Edited by my FAMU colleague Tomáš Dvořák and me, it offers an interdisciplinary perspective on the scale and quantity of images in contemporary visual culture. From the mass-image to post-photography, AI to online visual culture, planetary diagrams to LIDAR, the breadth of topics is wide. The book emerges from our Operational Images and Visual Culture project (FAMU, Prague, funded by the Czech Science Foundation, 2019-2023).

Here are the really nice blurbs from Lev Manovich, Lisa Parks, and Peter Szendy:

“Among the many fundamental changes taking place in contemporary photography and media culture, probably the most important are changes in scale. The new magnitude of image production, the instant global dissemination of billions of new images, and the adoption of AI that turns these images into big data are only some examples of how the visual has been “scaled up” in the 21st century. Now we finally have a first book that rethinks the history and theory of photography through the lens of scale – and connects this concept to a range of others including measure, politics, gender, subjectivity, and aesthetics.”

– Lev Manovich, Presidential Professor, The Graduate Center, City University of New York

“Someone takes a picture somewhere in the world. Such a trivial action is multiplied by a trillion. Or much more, since the majority of pictures today are produced by machines for machines. This collection of essays brilliantly explores the unheard-of effects of scale on the ontology of photography and it touches upon the sublime of the infinity of digital images.”

– Peter Szendy, Brown University

“This book’s refreshing and much needed take on photography cuts through the infoglut and explores the apparatus, infrastructure, and operations of contemporary pictures. Addressing everything from snapshots to machine vision, Photography Off the Scale unfurls a vital field of technology, politics and aesthetics reshaping the world.”

– Lisa Parks, Distinguished Professor of Film & Media Studies, University of California-Santa Barbara

With Tomáš, we wrote a substantial introduction outlining the stakes of the approach – how it relates to scholarship in photography and links it to key questions of digital culture – and we are really pleased with the whole lineup of the book:

Introduction: On the Scale, Quantity and Measure of Images

Jussi Parikka & Tomáš Dvořák

Part I: Scale, Measure, Experience

1. The Mass Image, the Anthropocene Image, the Image Commons

Sean Cubitt

2. Beyond Human Measure: Eccentric Metrics in Visual Culture

Tomáš Dvořák

3. Living with the Excessive Scale of Contemporary Photography

Andrew Fisher

4. Feeling Photos: Photography, Picture Language and Mood Capture

Michelle Henning

5. Online Weak and Poor Images: On Contemporary Feminist Visual Politics

Tereza Stejskalová

Part II: Metapictures and Remediations

6. Photography’s Mise en Abyme: Metapictures of Scale in Repurposed Slide Libraries

Annebella Pollen

7. The Failed Photographs of Photography: On the Analogue and Slow Photography Movement

Michal Šimůnek

8. Strangely Unique: Pictorial Aesthetics in the Age of Image Abundance

Josef Ledvina

Part III: Models, Scans and AI

9. On Seeing Where There’s Nothing to See: Practices of Light Beyond Photography

Jussi Parikka

10. Planetary Diagrams: Towards an Autographic Theory of Climate Emergency

Lukáš Likavčan & Paul Heinicker

11. Undigital Photography: Image-Making Beyond Computation and AI

Joanna Zylinska

12. Coda: Photography in the Age of Massification

Joan Fontcuberta & Geoffrey Batchen

The book is published by Edinburgh University Press and is part of their Technicities book series. A special thanks to Elise Hunchuck for her outstanding expertise in helping us to fine-tune the writing and to Abelardo Gil-Fournier for the cover image that comes from his project Bildung.

For any inquiries about the book, review copy requests, etc, please contact me or Tomáš.

Expert-Readable Images

Welcome to our Operational Images project event on Expert-Readable Images at the end of November in Prague at FAMU, part of the Academy of Performing Arts. Please find below a short description of the event and a list of invited speakers. The event is organised by Dr Tomáš Dvořák (FAMU) and myself together with the project team.

Expert-Readable Images

November 29, 2019 – FAMU in Prague

While machine-readable images have become a constant reference point for photographic theory and contemporary visual media studies, our event turns to the question: what are the specialised expert-readable image practices that cater to the technical specifics, institutional demands, and particular knowledge-roles of visual culture?

Recent discussions of operational images as well as technical media and visual cultures often invoke the distinction between human and machine vision. Automated visual systems are claimed to produce images by and for machines, pictures that are unreadable or even invisible to human eyes. Our conference seeks to complicate this dichotomy by addressing the field of professional perceptual skills, trained judgment, and expert practices of observation and instruction. Should we consider specific thought styles (to borrow a term from Ludwik Fleck) and thought collectives that develop simultaneously with the technologies of instrumental imaging and visualizing? What does a doctor see in a CT scan? What does a drone operator see on a monitor? What does a statistician see in a graph? What does a forensic analyst see in a digital model? What does a content moderator see in our holiday memories? What are the particular cultural techniques of practice, of training, and operation that govern these relations to images?

The one-day conference gathers specialists from the fields of media, visual culture, photography and science and technology studies (STS) to engage with the world of specialised technical images. We shift the focus from machines to the training and governance of humans who deal with those images.

For any queries, please get in touch via email at jussi.parikka@famu.cz.

Poster design: Abelardo Gil-Fournier.

Machine Learned Futures

Abelardo Gil-Fournier and I are writing a text or two on questions of temporality in contemporary visual culture. Our specific angle is on (visual) forms of prediction and forecasting as they emerge in machine learning: planetary surface changes, traffic and autonomous cars, etc. Here’s the first bit of an article on the topic (forthcoming later, we hope, in both German and English).

“‘Visual hallucination of probable events’, or, on environments of images and machine learning”

I Introduction

Contemporary images come in many forms but also, importantly, in many times. Screens, interfaces, monitors, sensors and many other devices that are part of the infrastructure of knowledge build up many forms of data visualisation in so-called real-time. While data visualisation might not be that new a technical form of organising information as images, it takes a particularly intensive temporal turn with networked data, which has been discussed for example in contexts of financial speculation.[1] At the same time, these imaging devices are part of an infrastructure that does not merely observe the microtemporal moment of the “real”, but unfolds in the now-moment. In terms of geographical, geological and broadly speaking environmental monitoring, the now-moment expands into near-future scenarios in which other aspects, including the imaginary, are at play. Imaging becomes a form of nowcasting, exposing the importance of understanding change changing.

Here one thinks of Paul Virilio and how “environment control” functions through the photographic technical image. In Virilio’s narrative the connections of light (exposure), time and space are bundled up as part of the general argument about the disappearance of the spatio-temporal coordinates of the external world. From real space we move to the ‘real-time’ interface[2] and to an analysis of how visual management detaches from the light of the sun, the time of the seasons, the longue durée of planetary qualitative time, and moves to the internal mechanisms of calculation that pertain to electric and electronic light. Hence, the photographic image that is captured prescribes for Virilio the exposure of the world: it is an intake of time and an intake of light. Operating on the world as active optics, these intakes then become the defining temporal frame for how environments are framed and managed through operational images, to use Harun Farocki’s term, which then operationalize how we see geographic spaces too. The time of photographic development (Niépce), the “cinematographic resolution of movement” (Lumière), or for that matter the “videographic high definition of a ‘real-time’ representation of appearances”[3] are part of Virilio’s broad chronology of time in technical media culture.

But what is at best implied in this cartography of active optics is the attention to mobilization of time as predictions and forecasts. For operations of time and production of times move from meteorological forecasting to computer models, and from computer models to a plethora of machine learning techniques that have become another site of transformation of what we used to call photography. Joanna Zylinska names this generative life of photography as its nonhuman realm of operations that rearranges the image further from any historical legacy of anthropocentrism to include a variety of other forms of action, representation and temporality.[4] The techniques of time and images push further what counts as operatively real, and what forms of technically induced hallucination – or, in short, in the context of this paper, machine learning – are part of current forms of production of information.

In the information society and digital culture, too, images persist. They persist as markers of time in several senses that refer not only to what the image records – the photographic indexicality of a time passed or the documentary status of images as used in various administrative and other contexts – but also to what it predicts. Techniques of machine learning are one central aspect of the reformulation of images and their uses in contemporary culture: from video prediction of the complexity of multiple moving objects we call traffic (cars, pedestrians, etc.) to satellite imagery monitoring agricultural crop development and forest change. Such techniques have become one central example of where earth’s geological and geographical changes become understood through algorithmic time, and also where, for instance, very rapidly changing vehicle traffic is treated in the same way as the much slower earth surface durations of crops. In all cases, a key aspect is the ability to perceive potential futures and fold them into real-time decision-making mechanisms.

The computational microtemporality takes a futuristic turn; algorithmic processes of mobilizing datasets in machine learning become activated in different institutional contexts as scenarios, predictions and projections. Images run ahead of their own time as future-producing techniques.

Our article is interested in a distinct technique of imaging that speaks to the technical forms of time-critical images: Next Frame Prediction and the forms of predictive imagining employed in contemporary environmental images (such as agriculture and climate research). While questions about the “geopolitics of planetary modification”[5] have become a central aspect of how we think of the ontologies of materiality and the Earth as Kathryn Yusoff has demonstrated, we are interested in how these materialities are also produced on the level of images.

Real time data processing of the Earth not as a single view entity, but an intensively mapped set of relations that unfold in real time data visualisations becomes a central way of continuing the earlier more symbolic forms of imagery such as the Blue Marble.[6] Perhaps not deep time in the strictest geological terms, agricultural and other related environmental and geographical imaging are however one central way of understanding the visual culture of computational images that do not only record and represent, but predict and project as their modus operandi.

This text will focus on this temporality of the image that is part of these techniques, from the microtemporal operation of Next Frame Prediction to how it resonates with contemporary space imaging practices. While the article is itself part of a larger project where we elaborate with theoretical humanities and artistic research methods the visual culture of environmental imaging, we are unable in this restricted space to engage with the multiple contexts of this aspect of visual culture. Hence we will focus on the question of computational microtime, the visualized and predicted Earth times, and the hinge at the centre of this: the predicted time that comes out as an image. The various chrono-techniques[7] that have entered the vocabulary of media studies are particularly apt in offering a cartography of what analytical procedures are at the back of producing time. Hence the issue is not only about what temporal processes are embedded in media technological operations, but also about what sounds like a merely tautological statement: what times are responsible for the production of time? What times of calculation produce imagined futures, statistically viable cases, predicted worlds? In other words, what microtemporal times are in our case at the back of a sense of futurity that is conditioned in a calculational, software-based and dataset-determined system?

[1] Sean Cubitt, Three Geomedia, in: Ctrl-Z 7, 2017.

[2] Paul Virilio, Polar Inertia, London-Thousand Oaks-New Delhi, 2000, p. 55.

[3] Ibid., p. 61.

[4] See Joanna Zylinska, Nonhuman Photography, Cambridge (MA) 2017.

[5] Kathryn Yusoff, The Geoengine: geoengineering and the geopolitics of planetary modification, in: Environment and Planning A 45, 2013, pp. 2799-2808.

[6] See also Benjamin Bratton, What We Do Is Secrete: On Virilio, Planetarity and Data Visualisation, in: John Armitage/Ryan Bishop (eds.), Virilio and Visual Culture, Edinburgh 2013, pp. 180–206, here pp. 200-203.

[7] Wolfgang Ernst, Chronopoetics. The Temporal Being and Operativity of Technological Media, London-New York 2016.

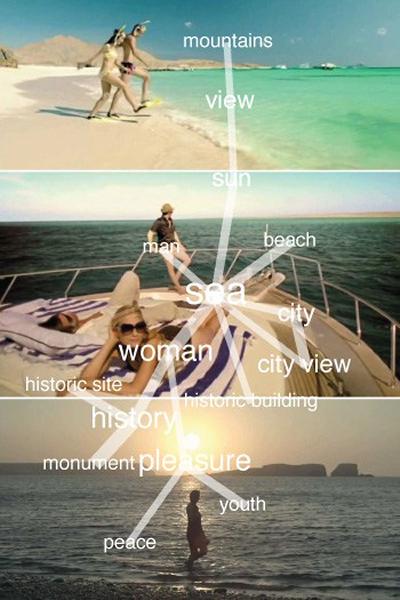

Image may contain

Visual culture nowadays.

The Steganographic Image

It’s the Conspiracy week at the Photographers’ Gallery in London and I was asked to write a short text on what lies inside the image (code). In other words, I wrote a short text on the Steganographic Image, and hiding messages in plain sight, although in this case, encoded “inside” a digital image. The image that tricks, the image that operates behind your back, or more likely, triggers processes in front of your eyes, in plain sight, invisible. As I was reminded, this is also an idea that Akira Lippit has in a different context developed through Derrida. To quote Lippit (quoting at first Derrida): ‘“Visibility,” he says, “is not visible.” Invisibility is folded into the condition of visibility from the beginning. There is no visibility that is not also invisible, no visibility that is not in some way always spectral.’ One would be tempted to argue that this is where this consideration of the visual meets up with the history of cryptography, or ciphering and deciphering. Or as Francis Bacon put it in 1605, in ways that are part of the longer media archaeology of the steganographic image too: “The virtues of ciphers are three: that they be not laborious to write and read; that they be impossible to decipher; and, in some cases, that they be without suspicion.” It is especially this third virtue that remains of interest when looking at images without such suspicion: the most banal, tedious of pictures; a spectrality that conjures up hidden passages, triggers and operations.

My short text can be found here online. It’s only scratching the steganographic surface.

A short preview of the text.

Hidden in Plain Sight: The Steganographic Image

Who knows what went into an image, what it includes and what it hides? This is not merely a question of the fine art historical importance of materials, nor even a media historical intrigue of chemistry, but one of steganography – hiding another meaningful pattern, perhaps a message, in data; inside text or an image. This image that is always more than. More than what? Isn’t it obvious from the amount of work gone into art-theoretical considerations of the inexhaustible meanings of the photographic image that it has always been a multiplicity: contexts, fluctuating meanings, readings and the insatiable desire to look at things in order to discover their depths?

As such, a steganographic inscription is neither a depth nor the plain surface but somewhere in between. In contemporary images made of data it refers to how the image can be coded as more than is seen, but also more than the image should do. The steganographic digital image can be executed; it includes instructions for the computer to perform. Photographs as part of a longer history of communication media are one particular way of saying more than meets the eye, but this image also connects to histories of secret communication from the early modern period, to more recent discussions in security culture, as well as fiction such as William Gibson’s novel Pattern Recognition (2003). Were J.G. Ballard’s 1950s billboard mysteries one sort of cryptographic puzzle that hid a message in plain visual sight?

Data Asymmetry – a Burak Arikan exhibition in Winchester

I am happy to announce that Data Asymmetry, an exhibition by the Turkish artist and technologist Burak Arikan, opens in November at the Winchester Gallery (at the Winchester School of Art).

The exhibition addresses critical mapping as a way to understand data culture. The pieces raise questions about the predictability of ordinary human behavior with MyPocket (2008); reveal insights into the infrastructure of megacities like Istanbul as a network of mosques, republican monuments and shopping malls (Islam, Republic, Neoliberalism, 2012); remap and organise recurring patterns in the official tourism commercials of governments with Monovacation (2012); explore the growth of networks via visual and kinetic abstraction with Tense Series (2007-2012); and showcase collective production of network maps from the Graph Commons platform. As the works emphasise, the aim of the Graph Commons is to empower people and projects through network mapping, and to collectively experiment with mapping as an ongoing practice.

Previously Arikan has had his work shown at venues such as the Museum of Modern Art New York, Venice Architecture Biennale, São Paulo Biennial, Istanbul Biennial, Berlin Biennial, Ars Electronica and many others.

The exhibition opens November 10.

In addition to the exhibition in the Winchester gallery, Arikan is organising a workshop on critical mapping and network graphs at the Winchester School of Art.

Arikan’s visit also includes another workshop in London at the British Library and as part of the Internet of Cultural Things-project. The visit to Winchester is also supported by the AMT research group at WSA.

For more context on Arikan’s art practice, please find here an audio interview I did with Arikan on stage at transmediale 2016 in Berlin.

For information and queries, please contact me: Contact details.

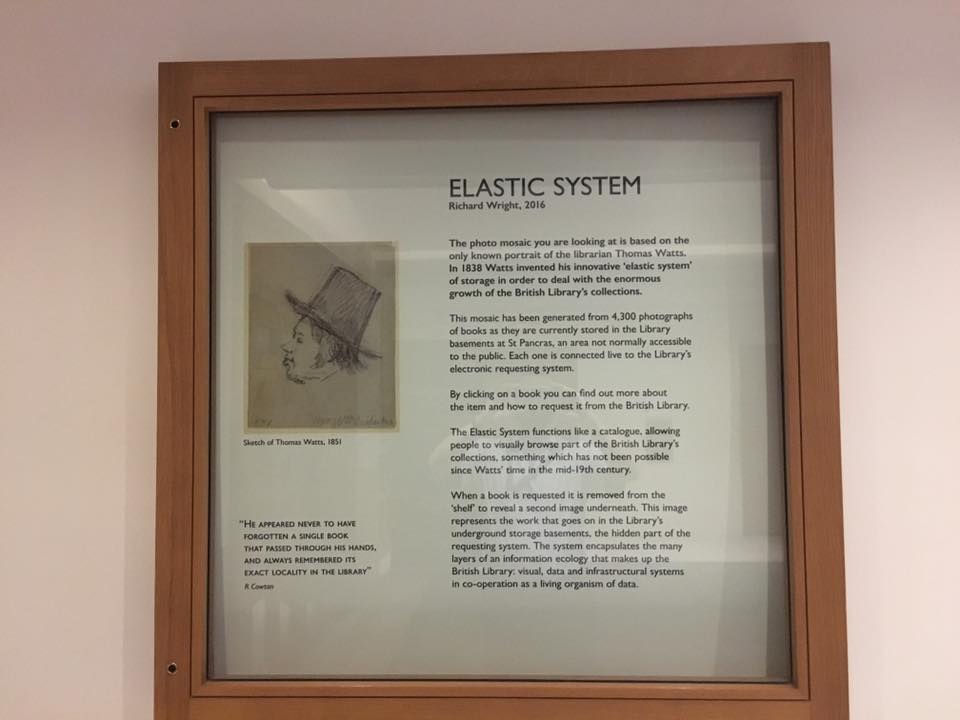

The Elastic System – Data in a Cultural Institution

One of the milestones in our Internet of Cultural Things-project (AHRC: AH/M010015/1) was the launch of artist Richard Wright’s Elastic System. With an interesting media archaeological angle, the art project creates an alternative visual browsing/search/request system on top of the existing British Library one. As an experimental pilot, this interface (an installation and soon an online version) returns the library to an age of browsable, visual access to books.

The King’s Library at the British Library in 1851. Now the King’s Library tower is the only permanently publicly exhibited collection at the BL. Source: suzanne-historybritishlibrary.blogspot.co.uk

While in the middle of the 19th century the library space could still be seen more as a public space with visual access to the collections, the modern storage and delivery systems at the BL created a different sort of spatial setting. The sheer increase in the number of items in its holdings necessitated this change, which could easily be seen as a precursor to the issues that more recent information culture has had to face: lots of stuff that needs to be stored, equipped with an address, and locatable. The short animation Knowledge Migration by Richard Wright is one way to visualize the growth in acquisitions on a geographically mapped timeline, showing “each item’s place and date of publication (or date of acquisition where available) since the library’s foundation in 1753.” Knowledge Migration used a random sample of 220,000 records from the print catalogue.

The current reality of the British Library as a data institution can be approached through its infrastructure and its many datasets and systems, including the ABRS (Automated Book Requesting System). This infrastructure includes the data-based systems and digital catalogues, the online interface and searchable collections, the automated robotic systems in the Boston Spa storage/archive space, and also the important human labour that is part of this automated system.

The Elastic System project introduction by Wright states:

“ELASTIC SYSTEM is a database portrait of the librarian Thomas Watts. In 1838 Watts invented his innovative “elastic system” of storage in order to deal with the enormous growth of the British Library’s collections.

The mosaic image of Watts has been generated from 4,300 books as they are currently stored in the library basements at St Pancras, an area not normally accessible to the public. Each one is connected live to the library’s electronic requesting system.

The Elastic System functions like a catalogue, allowing people to visually browse part of the British Library’s collections, something which has not been possible since Watts’ time. When a book is requested it is removed from the “shelf” to reveal a second image underneath, an image that represents the work that goes on in the library’s underground storage basements, the hidden part of the modern requesting system.”

You can view and use the installation system at the British Library in London until September 23, 2016 – it is located at the front of the Humanities Reading room (during library opening hours).

The online version will be launched in the near future.

Here’s Richard Wright’s blog post about his artistic residency at the British Library as part of our project: Elastic System: How to Judge a Book By Its Cover.

We are discussing these themes in Liverpool on September 14, 2 pm, at FACT – this panel on cultural data is part of the Liverpool Biennial public programme.

A thank you to Aquiles Alencar-Brayner (BL, Digital Curator) for the snapshots of the texts above.

Autonomous AI as Weapons, Policy and Economy

With my colleague Ryan Bishop, I did some popular writing over the summer, responding to the recent call to ban autonomous weapons systems. The open letter was widely discussed but usually with the same emphases, so we wanted to add our own flavour to the debate. What if they are already here? What if the media archaeology of autonomous weapons goes way back to the experimental weapons development started during the Cold War?

Here’s our short piece in The Conversation. It was rather heavily edited so I took the liberty to paste below the longer original version (not copyedited though).

__

Ryan Bishop and Jussi Parikka, Winchester School of Art/University of Southampton

Autonomous AI as Weapons, Policy and Economy

A significant cadre of scholars and corporate representatives recently signed an open letter calling for a “ban on offensive autonomous weapons systems.” The letter was widely publicised and supported by well-known figures from Stephen Hawking to Noam Chomsky, corporate influentials like Elon Musk, Google’s leading AI researcher Demis Hassabis and Apple co-founder Steve Wozniak. The letter received much attention in the news and social media with references to killer AI robots and mentions of The Terminator, adding a science-fictional flavour. But the core of the letter referred to an actual issue having to do with the possibilities of autonomous weapons becoming a wide-spread tool in larger conflicts and in various tasks “such as assassinations, destabilizing nations, subduing populations and selectively killing a particular ethnic group.”

One can quibble little with the consciences on display here even if scholars such as Benjamin Bratton already earlier argued that we need to be aware of much wider questions about design and synthetic intelligence. Such issues cannot be reduced to the Terminator-imaginary and narcissistically assume that AI is out there to get us. Scholars should anyway address the much longer backstory to autonomous weapons systems that make the issue as political as it is technological.

The letter concludes with the semi-apocalyptic and not altogether inaccurate assertion that “The endpoint of this technological trajectory is obvious: autonomous weapons will become the Kalashnikovs of tomorrow. The key question for humanity today is whether to start a global AI arms race or to prevent it from starting.” However, this is not the endpoint but rather the starting point.

Unfortunately the global AI arms race has already started. The most worrying dimension of this AI arms race is that it does not always look like one. The division between defensive and offensive weapons was already blurred during the Cold War.

The doctrine for pre-emptive strike laid waste to the difference between the two. The agile capacity to reprogram autonomous systems means all systems can be altered with relative ease, and the offensive/defensive distinction disappears even more fully.

The new weapons systems can look like the Planetary Skin Institute or the Central Nervous System for the Earth (by Hewlett-Packard), two of the many autonomous remote sensing systems that allow for automated real-time responses to the conditions they are meant to track. And to act on that information. Automatically.

In the present, platforms for planetary computing operate with and through remote sensing systems that gather together real-time data of the earth for specific stakeholders through models and simulations. A system such as the Planetary Skin Institute, initiated by NASA and Cisco Systems, operates under the aegis of providing a multi-constituent platform for planetary eco-surveillance. It was originally designed to offer a real-time open network of simulated global ecological concerns, especially treaty verification, weather crises, carbon stocks and flows, risk identification and scenario planning and modeling for academic, corporate and government actors (thus replicating the US post World War II infrastructural strategy). It is within this context of autonomous remote sensing systems that AI weaponry must be understood; the hardware and software, as well as overall design and implementation, are the same for each. Similarly, the provenance of all of these resides primarily in Cold War systems designs and goals.

The Planetary Skin Institute now operates as an independent non-profit global R & D organization with its stated goal of being dedicated to “improving the lives of millions of people by developing risk and resource management decision services to address the growing challenges of resource scarcity, the land-water-food-energy-climate nexus and the increasing impact and frequency of weather extremes.” It therefore claims to provide a “platform to serve as a global public good,” thus articulating a position and agenda as altruistic as can possibly be imagined. The Planetary Skin Institute works with “research and development partners across multiple sectors regionally and globally to identify, conceptualize, and incubate replicable and scalable big data and associated innovations, that could significantly increase the resilience of low-income communities, increase food, water, and energy security and protect key ecosystems and biodiversity”. What it does not mention is the potential for resource futures investment that could accompany such data and information. This reveals the large-scale drive from all sectors to monetize or weaponize all aspects of the world.

The Planetary Skin Institute’s system echoes what a number of other remote automated sensing systems provide in terms of real-time, tele-tracking occurrences in many parts of the globe. The slogan for the institute is “sense, predict, act,” which is what AI weapons systems do, automatically and autonomously. Autonomous weapons are said to be “a third revolution in warfare, after gunpowder and nuclear arms” but such capacities for weapons have been around since at least 2002. At that time drones transitioned to being “smart weapons” and thus enabled to select their own targets to fire on (usually using GPS locations on hand-held devices). Geolocation based on SIM cards is now also used in U.S. drone assassination operations.

Rather than being only a matter of speculation about the future, autonomous systems have an institutional legacy as part of the Cold War. They are part of our inheritance from WWII and Cold War complex systems interacting between university, corporate and military-based R&D. Agencies such as the American DARPA are the legacy of the Cold War: founded in 1958, it is still very active as a high-risk, high-gain sort of model for speculative research.

The R&D innovation work is also spread out to the wider private sector through funding schemes and competitions. This essentially illuminates the continuation of Cold War schemes in current private sector development work: “the security industry” is already structurally so tied to governmental policies, military planning and economic development that to ask about banning AI weaponry is to point to wider questions about the political and economic systems that support military technologies as an economically lucrative area of industry. Author E.L. Doctorow once summarised the nuclear bomb in relation to its historical context in the following manner: “First, the bomb was our weapon. Then it became our foreign policy. Then it became our economy.” We need to be able to critically evaluate the same triangle as part of autonomous weapons development, which is not merely about the technology but indeed about policies and politics, and increasingly, economies and economics.

The Consortium: On Autonomous Sensing

Last Spring we at #WSA started a consortium partnership with two other universities: University of California (San Diego) and Parsons School of Design (New School, NYC). Together with Jordan Crandall and Benjamin Bratton from UCSD and Ed Keller from Parsons we are addressing themes such as machine perception, remote sensing, synthetic intelligence, etc. We are also organising a panel for transmediale 2015 – and Jordan Crandall will be performing his drone performance Unmanned.

But already before transmediale at the end of this month, we are organising a group meeting and a public panel this week in San Diego under the rubric of “Autonomous: Sensing”.

Last Spring, we organised a panel in Winchester on Design and Contemporary Technological Realities and we aim to continue these meetings alongside some publications and curating exhibitions with the consortium partners.

— update —

Below some pictures from the San Diego-event and our panel.

The Warhol Forensics

The news about the (re)discovered Andy Warhol-images, excavated by means of digital forensics, has been making the rounds in news and social media. In short, a team of experts – including Cory Arcangel – discovered Warhol’s Amiga-paintings from 1985 floppy discs. As described in the news story:

“Warhol’s Amiga experiments were the products of a commission by Commodore International to demonstrate the graphic arts capabilities of the Amiga 1000 personal computer. Created by Warhol on prototype Amiga hardware in his unmistakable visual style, the recovered images reveal an early exploration of the visual potential of software imaging tools, and show new ways in which the preeminent American artist of the 20th century was years ahead of his time.” The images are related to the famous Debbie Harry-image Warhol painted on Amiga.

The case is an interesting variation on themes of media art history as well as digital forensics. As Julian Oliver coined it in a tweet:

The media archaeological enters with a realisation of the importance of such methods for the cultural heritage of born-digital content, but there’s more. The non-narrative focus of such methodologies is a different way of accessing what could be thought of as media archaeology of software culture and graphics. The technological tools carry an epistemological, even ontological weight: we see things differently; we are able to access a world previously unseen, also in historical contexts.

But there is a pull towards traditional historical discourses. The project demonstrates a technical understanding of cultural heritage and contemporary software culture but rhetorically frames it as just another part of the art historical/archaeological mythology of rediscovering long lost masterpieces of a Genius. This side still needs some updating so that the technical episteme of the excavation, detailed here [PDF] can become fully realised. Techniques of reverse engineering as well as insights into image formats as ways to understand the technical image need to be matched up with discourse that is able to demonstrate something more than traditional art history by new means. It needs to be able to show what is already at stake in these methods: a historical mapping of the anonymous forces of history, to use words from S. Giedion.

Scholars such as Matt Kirschenbaum have already demonstrated the significant stakes of digital forensics as part of a radical mindset to historical scholarship, heritage and media theory and we need to be able to build on such work that is theoretically rich.